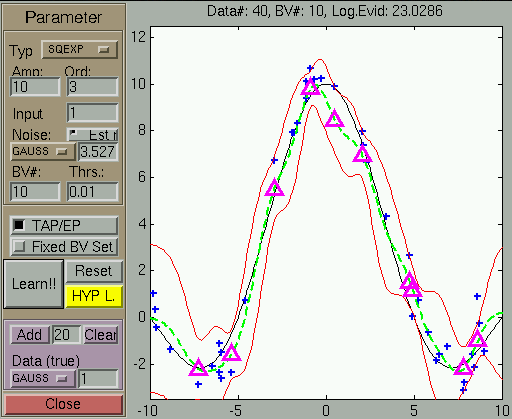

Regression example

The first example program (demogp_reg_gui) generates noisy realisations of the sinc function. The type and amplitude of the noise, as well as the model parameters, can be set manually.
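
To make the data-generation step concrete, here is a minimal sketch in Python of how noisy realisations of the sinc function could be produced. It is not the toolbox's own code: the function names, the input range, the use of the unnormalised sinc, and the Gaussian noise model are all assumptions made for illustration.

```python
import math
import random


def sinc(x):
    """Unnormalised sinc, sin(x)/x, with the limit value 1 at x = 0 (assumed form)."""
    return 1.0 if x == 0.0 else math.sin(x) / x


def noisy_sinc(n_points, noise_std, x_range=(-10.0, 10.0), seed=0):
    """Draw n_points inputs uniformly from x_range and add Gaussian noise to sinc(x).

    The input range and the Gaussian noise are illustrative assumptions; the
    demo lets the user choose the noise type and amplitude.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    lo, hi = x_range
    xs = sorted(rng.uniform(lo, hi) for _ in range(n_points))
    ys = [sinc(x) + rng.gauss(0.0, noise_std) for x in xs]
    return xs, ys


# Example: 40 training points with a hypothetical noise amplitude of 0.1.
xs, ys = noisy_sinc(n_points=40, noise_std=0.1)
```

Changing `noise_std` plays the role of the noise amplitude setting; a different noise type would replace the `rng.gauss` call.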

With the default settings and 40 training points, the result of learning (before the hyper-parameter adaptation) is visualised in the image below.

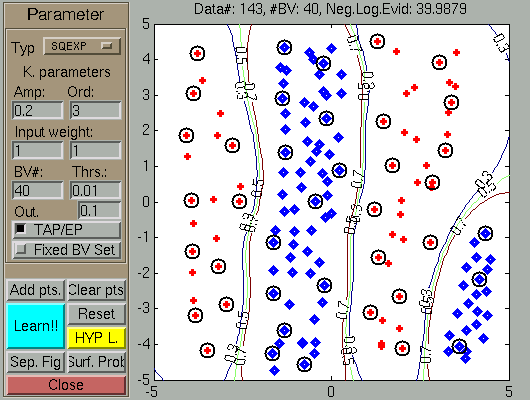

Classification example

As in the previous section, the program (demogp_class_gui) illustrates sparse GP inference for binary classification.